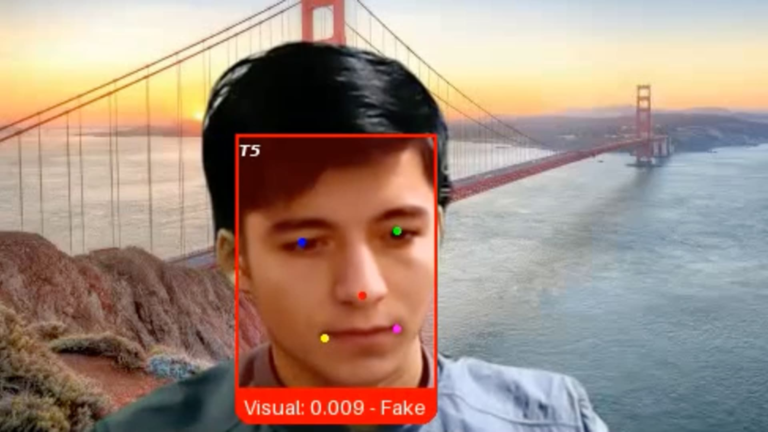

An image provided by Pindrop Security shows a fake job candidate the company has nicknamed “Ivan X,” a scammer using deepfake AI technology to mask his face, according to Pindrop CEO Vijay Balasubramaniyan.

Courtesy: Pindrop Security

When the voice authentication startup Pindrop Security posted a recent job opening, one candidate stood out from hundreds of others.

The applicant, a Russian coder named Ivan, seemed to have all the right qualifications for the senior engineering role. However, when he was interviewed over video last month, Pindrop’s recruiter noticed that Ivan’s facial expressions were slightly out of sync with his words.

That is because the candidate, whom the company has since nicknamed “Ivan X,” was a scammer using deepfake software and other generative AI tools in a bid to get hired by the tech company, said Pindrop CEO and co-founder Vijay Balasubramaniyan.

“Gen AI has blurred the line between what it is to be human and what it means to be machine,” said Balasubramaniyan. “What we see is that individuals are using these fake identities and fake faces and fake voices to secure a job, even sometimes going so far as to do a face swap with another person who shows up for the work.”

Companies have long fended off attacks from hackers hoping to exploit vulnerabilities in their software, employees or suppliers. Now another threat has emerged: job candidates who are not who they say they are, wielding AI tools to fabricate photo IDs, generate employment histories and provide answers during interviews.

The rise of AI-generated profiles means that by 2028, 1 in 4 job candidates worldwide will be fake, according to the research and advisory firm Gartner.

The risk to a company of hiring a fake job seeker can vary depending on the person’s intentions. Once hired, the impostor can install malware to demand a ransom from the company, or steal its customer data, trade secrets or funds, according to Balasubramaniyan. In many cases, the deceitful employees are simply collecting a salary they could not otherwise obtain.

“Massive” increase

Cybersecurity and cryptocurrency companies have seen a recent surge in fake job seekers, industry experts told CNBC. Because these companies often hire for remote roles, they are valuable targets for bad actors, these people said.

Ben Sesser, the CEO of BrightHire, said that he first heard of the problem a year ago and that the number of fraudulent job candidates has “accelerated massively” this year. His company helps more than 300 corporate customers in finance, technology and health care assess prospective employees in video interviews.

“Humans are generally the weak link in cybersecurity, and the hiring process is an inherently human process with many hand-offs and many different people involved,” said Sesser. “It has become a weak point that people are trying to expose.”

But the problem is not limited to the technology industry. More than 300 U.S. companies inadvertently hired impostors with ties to North Korea for IT work, including a major national television network, a defense manufacturer, an automaker and other Fortune 500 companies, the Justice Department said in May.

The workers used stolen American identities to apply for remote jobs and deployed remote networks and other techniques to hide their real locations, the DOJ said. They ultimately sent millions of dollars in wages to North Korea to help finance the country’s weapons program, the Justice Department said.

That case, involving a ring of alleged enablers, including an American citizen, exposed a small part of what U.S. authorities have said is a sprawling overseas network of thousands of IT workers with North Korean ties. The DOJ has since filed more cases involving North Korean IT workers.

A growth industry

Fake job seekers are not letting up, if the experience of Lili Infante, founder and chief executive officer of CAT Labs, is any indication. Her Florida-based startup sits at the intersection of cybersecurity and cryptocurrency, which makes it particularly attractive to bad actors.

“Every time we list a job posting, we get 100 North Korean spies applying to it,” said Infante. “When you look at their resumes, they look amazing; they use all the keywords for what we are looking for.”

Infante said that her company relies on an identity verification firm to weed out fake candidates, part of an emerging sector that includes companies such as iDenfy, Jumio and Socure.

An FBI wanted poster shows suspects the agency said are IT workers from North Korea, officially called the Democratic People’s Republic of Korea.

Source: FBI

The fake-employee industry has expanded beyond North Koreans in recent years to include criminal groups in Russia, China, Malaysia and South Korea, according to Roger Grimes, a veteran computer security consultant.

Ironically, some of these fraudulent workers would be considered top performers at most companies, he said.

“Sometimes they will do the role poorly, and then sometimes they perform it so well that I have had a few people tell me that they were sorry that they had to let them go,” said Grimes.

His employer, the cybersecurity company KnowBe4, said in October that it had inadvertently hired a North Korean software engineer.

The worker used AI to alter a photo, combined it with a valid but stolen American identity, and got through background checks, including four video interviews, the firm said. He was discovered only after the company found suspicious activity coming from his account.

Fighting deepfakes

Despite the DOJ case and a few other publicized incidents, hiring managers at most companies are generally unaware of the risks of fake job candidates, according to BrightHire’s Sesser.

“They are responsible for talent strategy and other important things, but being on the front lines of security has not been one of them,” he said. “People think they are not experiencing it, but I think it is probably more likely that they just don’t realize that it is happening.”

As the quality of deepfake technology improves, the problem will be harder to avoid, said Sesser.

As for “Ivan X,” Pindrop’s Balasubramaniyan said that the startup had used a new video authentication program it had created to confirm that he was a deepfake fraud.

While Ivan claimed to be located in western Ukraine, his IP address indicated that he was in fact thousands of kilometers to the east, in a possible Russian military facility near the North Korean border, the company said.

Pindrop, backed by Andreessen Horowitz and Citi Ventures, was founded more than ten years ago to detect fraud in voice interactions, but could soon pivot to video authentication. Its customers include some of the largest U.S. banks, insurers and health companies.

“We are no longer able to trust our eyes and ears,” said Balasubramaniyan. “Without technology, you are worse off than a monkey with a random coin toss.”